One thing that really gets our goat is bad data.

Data that fails to tell the right story, or provide valid and reliable evidence, can leave school leaders bewildered rather than informed.

Nicki has recently served as a Trustee and Chair of Governors during inspections under the new Ofsted framework, seeing firsthand how these changes play out in practice. The shift toward a prescriptive “secure fit” model can create tension and anxiety for school leaders. Even when a school is performing well overall, a single outlier in data, attendance, or behaviour can disproportionately influence judgements if all descriptors must be met.

This raises an important question: are we measuring quality — or are we measuring compliance?

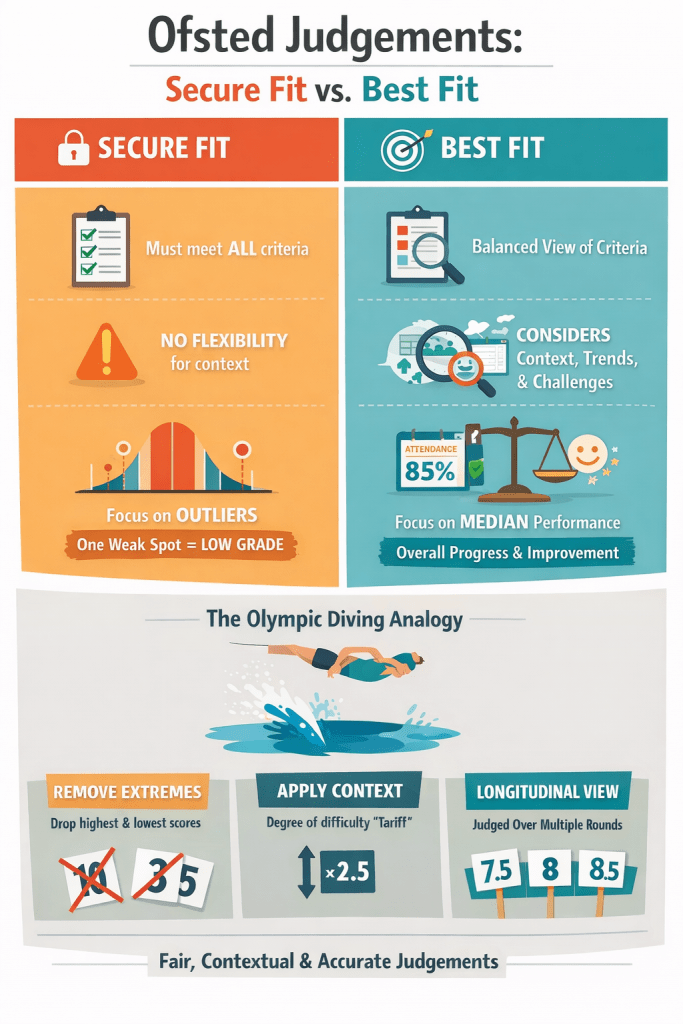

A recent TES article, “Headteachers worried about new Ofsted inspection grading”, explored concerns around the new Ofsted inspection methodology. The piece highlighted two possible approaches inspectors might take:

- Secure fit: Every descriptor in the Ofsted judgement must be fully met for a school to earn that overall judgement.

- Best fit: Not every descriptor needs to be met. Inspectors can apply professional judgement and discretion to determine the overall judgement.
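To make the difference concrete, here is a minimal sketch of the two rules. It is our own illustration rather than Ofsted's method: the descriptor names, the `secure_fit` and `best_fit` functions, and the 0.75 threshold standing in for professional judgement are all assumptions made up for this example.

```python
def secure_fit(descriptors_met):
    """Secure fit: the judgement is earned only if every descriptor is met."""
    return all(descriptors_met.values())


def best_fit(descriptors_met, threshold=0.75):
    """Crude stand-in for best fit: most descriptors must be met.

    The threshold is an invented proxy for professional judgement,
    which in reality is not a simple percentage.
    """
    share_met = sum(descriptors_met.values()) / len(descriptors_met)
    return share_met >= threshold


# Hypothetical school: strong overall, with one dip in attendance.
school = {
    "curriculum": True,
    "teaching": True,
    "behaviour": True,
    "leadership": True,
    "attendance": False,
}

print(secure_fit(school))  # False: one unmet descriptor blocks the judgement
print(best_fit(school))    # True: four of five descriptors are met
```

The same school passes one rule and fails the other, and the only thing that changed is how a single unmet descriptor is treated.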

At first glance, “secure fit” seems rigorous and objective. Yet, in practice, it risks punishing schools for minor anomalies or “outliers” that don’t reflect the broader picture of school performance.

This is where looking at how the data is distributed becomes helpful.

What school data actually looks like

In our experience on governing boards, schools’ performance data often looks roughly like a normal distribution: most results cluster around the middle, while a few subjects or year groups overperform or underperform due to context, such as small cohorts, demographic variations, recruitment challenges, or new leadership. These are the outliers.

During inspections, we’ve seen secure fit approaches penalise schools for these isolated dips, even when the overall trajectory is positive.

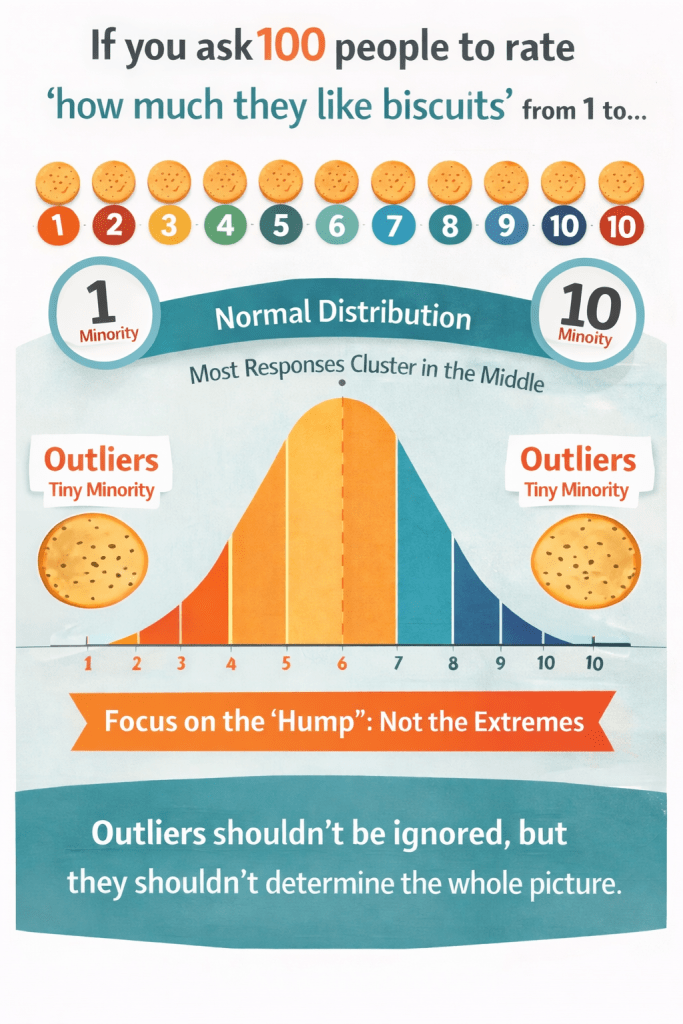

It reminded us of a cautionary tale: if you ask 100 people to rate “how much they like biscuits” from 1 to 10, you’ll typically get a bell-shaped curve, with most responses clustered in the middle and a tiny minority giving extreme scores of 1 or 10. Those extremes are outliers. They shouldn’t be ignored entirely, but neither should they disproportionately shape the overall picture.

So the question becomes: should the outlier determine the judgement?

Lessons from Olympic diving

Consider Olympic diving. Historically, every judge’s score counted, outliers included, and performances were averaged. That often led to odd and unfair results. The system was revised: the two highest and two lowest scores were discarded, and the remaining middle scores were added together and multiplied by a degree-of-difficulty “tariff.” This method accounted for context and limited the pull of any single extreme score, giving a fairer and more accurate result.
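As a rough sketch of that trimmed scoring, assuming a seven-judge panel and that the scores left after discarding are summed before the tariff is applied: the function name `diving_score` and all the numbers below are invented for illustration and are not taken from any real competition.

```python
def diving_score(judge_scores, difficulty, discard_each_end=2):
    """Drop the highest and lowest scores, sum the rest, apply the tariff."""
    ordered = sorted(judge_scores)
    kept = ordered[discard_each_end:len(ordered) - discard_each_end]
    if not kept:
        raise ValueError("not enough scores left after discarding")
    return sum(kept) * difficulty


# Invented panel of seven scores; one judge gives an outlying 4.0.
with_outlier = [7.5, 8.0, 8.0, 8.5, 8.0, 4.0, 9.5]
# The same panel if that judge had scored in line with the others.
without_outlier = [7.5, 8.0, 8.0, 8.5, 8.0, 8.0, 9.5]

print(diving_score(with_outlier, difficulty=3.0))     # 72.0
print(diving_score(without_outlier, difficulty=3.0))  # 72.0

# A plain average, by contrast, is pulled down by the single low score.
print(sum(with_outlier) / len(with_outlier) < sum(without_outlier) / len(without_outlier))  # True
```

The outlying judge changes the plain average but leaves the trimmed score untouched, which is the property we would like school judgements to share.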

Why can’t school data be treated the same way? And what might that look like in inspection?

Attendance, behaviour, and school performance

We know that attendance and behaviour are tightly linked. Low attendance often correlates with behaviour challenges and lower attainment. During inspections, it’s important to see the whole picture, not just a snapshot. Initiatives like the RISE attendance and behaviour hubs show that improving attendance often positively impacts behaviour, and vice versa.

In governance, we’ve seen schools implement excellent strategies to tackle attendance issues. Yet, under secure fit inspections, temporary attendance dips — sometimes caused by factors outside the school’s control — can disproportionately affect overall judgements. A best fit model allows inspectors to recognise contextual factors and longitudinal improvement trends, rather than penalising the school for one-off outliers.

This moves the focus from “Is it perfect?” to “Is it improving with intent and impact?”

Context, trends, and longitudinal insights

A secure fit model risks reducing inspections to a moment-in-time checklist, ignoring improvement over time. From a Trustee’s perspective, it’s far more meaningful to see trends:

- Has attendance improved over three years?

- Are behaviour incidents steadily declining due to a consistent policy?

- Are underperforming subjects showing clear evidence of progress?

These longitudinal insights allow trustees, governors and inspectors to tell the real story, rather than be misled by a single weak data point.
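One simple way to look at a trend rather than a single data point is to fit a straight line through the yearly figures and read off its slope. The sketch below does this with a basic least-squares calculation. The helper `trend_slope` and every figure in it are made up for illustration; real analysis would need more years of data and more care about context.

```python
def trend_slope(values):
    """Least-squares slope of values measured at equally spaced intervals."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


# Invented figures for three consecutive years.
attendance_pct = [91.0, 92.5, 94.0]
behaviour_incidents = [140, 120, 100]

print(trend_slope(attendance_pct))       # 1.5 percentage points gained per year
print(trend_slope(behaviour_incidents))  # -20.0 incidents per year
```

A positive slope for attendance and a negative slope for behaviour incidents both describe a school moving in the right direction, whatever any one term's snapshot happens to show.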

Applying “best fit” in practice

A best fit inspection approach allows:

- Focus on typicality

- Contextualisation of attendance and behaviour challenges

- Longitudinal evaluation of trends and school improvement

- Recognition that temporary dips or outliers don’t define overall quality

This aligns more closely with real-life experience as a trustee or chair of governors: you’re judging the school as a whole, not just isolated incidents.

Fair? Accurate? Actionable?

Schools don’t resist accountability — they want fair, accurate, and actionable judgements. A rigid secure fit framework may promise objectivity, but it often obscures the nuanced reality of school life.

Having seen inspections under the new framework, we’re convinced that adopting a best fit approach, enriched with context and longitudinal data, reflects the true quality of a school. It’s fairer for staff, more reflective for governors, and most importantly, more accurate in highlighting areas of excellence and genuine improvement.

After all, the goal isn’t just to tick boxes — it’s to recognise achievement, identify areas for growth, and support schools on their improvement journey.

Call to action

If you are a governor, trustee or senior leader:

- How has the shift toward secure fit affected your inspection experience?

- Have you seen outliers disproportionately shape judgements?

- What would a fairer operational model look like in your context?

This is a conversation worth having — not to dilute accountability, but to strengthen it.

If this resonates with you, share your experience, continue the dialogue, and challenge us all to think more carefully about how we measure what matters.

Because schools deserve judgements that are fair, contextual and genuinely reflective of their journey.

This blog was created by Simon and Nicki Antwis, Directors at Antwis Collaborative.

The Leadership Lens aims to offer a moment of stillness amid the storm—a regular space to consider not only how we lead, but why we lead.

Follow @Antwis Collaborative for updates, and let The Leadership Lens help illuminate your leadership path—one reflection at a time.
